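Having to train an image classification model with very little data is a common situation in practice, and one you have probably run into often if you have worked on computer vision projects before.

A "small" number of training samples can mean anywhere from a few hundred to a few tens of thousands of images, depending on the application. As a practical example, we will focus on classifying images as "dog" or "cat", using a dataset of 4000 pictures of cats and dogs (2000 cats, 2000 dogs). We will use 2000 pictures for training, 1000 for validation, and the final 1000 for testing.

We will review one basic strategy for tackling this problem: training a new model from scratch on what little data we have. We will start by naively training a small convolutional network (convnet) on our 2000 training samples, without any regularization, to set a baseline for later improvements.

That model will get us to a classification accuracy of about 71%. At that point, our main issue will be overfitting. We will then introduce data augmentation, a powerful technique for mitigating overfitting in computer vision. With data augmentation, we will improve the network and reach an accuracy of 82%.

In another article, we will explore two more essential techniques for applying deep learning to small datasets: feature extraction with a pre-trained network (which will get us to an accuracy of 90% to 93%), and fine-tuning a pre-trained network (which will get us to a final accuracy of 95%). Together, the following three strategies:

- Training a small model from scratch

- Doing feature extraction with a pre-trained model

- Fine-tuning a pre-trained model

will make up your toolbox for tackling computer vision problems on small datasets.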

    # Environment used for this Jupyter Notebook
    import platform
    import tensorflow
    import keras

    print("Platform: {}".format(platform.platform()))
    print("Tensorflow version: {}".format(tensorflow.__version__))
    print("Keras version: {}".format(keras.__version__))

    %matplotlib inline
    import matplotlib.pyplot as plt
    import matplotlib.image as mpimg
    import numpy as np
    from IPython.display import Image

Using TensorFlow backend.

Platform: Windows-10-10.0.15063-SP0

Tensorflow version: 1.4.0

Keras version: 2.0.9

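About the dataset

The dataset we will use (the cats vs. dogs picture set) is not packaged with Keras, so it has to be downloaded separately. Kaggle.com made it available at the end of 2013 as part of a computer vision competition. You can download the original dataset from: https://www.kaggle.com/c/dogs-vs-cats/data

The pictures are medium-resolution color JPEGs. They look like this:

The original dataset contains 25,000 images of dogs and cats (12,500 per class) and is 543MB (compressed). After downloading and uncompressing it, we will create a new dataset with three subsets: a training set with 1000 samples of each class, a validation set with 500 samples of each class, and finally a test set with 500 samples of each class.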

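Data preparation

- On Kaggle, click Download to get the image archive train.zip.

- Create a new subdirectory "data" in the directory that contains this Jupyter Notebook.

- Copy the archive downloaded from Kaggle into the "data" directory.

- Unzip train.zip.

Your directory structure should end up looking like this: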

    xx-yyy.ipynb
    data/
    └── train/
        ├── cat.0.jpg
        ├── cat.1.jpg
        ├── ..
        └── dog.12499.jpg

    import os

    # Root directory of the project
    ROOT_DIR = os.getcwd()

    # Directory that holds the image data
    DATA_PATH = os.path.join(ROOT_DIR, "data")

    import os
    import shutil

    # Path to the original dataset
    original_dataset_dir = os.path.join(DATA_PATH, "train")

    # Directory where the smaller dataset will be stored
    base_dir = os.path.join(DATA_PATH, "cats_and_dogs_small")
    if not os.path.exists(base_dir):
        os.mkdir(base_dir)

    # Directory for the training data
    train_dir = os.path.join(base_dir, 'train')
    if not os.path.exists(train_dir):
        os.mkdir(train_dir)

    # Directory for the validation data
    validation_dir = os.path.join(base_dir, 'validation')
    if not os.path.exists(validation_dir):
        os.mkdir(validation_dir)

    # Directory for the test data
    test_dir = os.path.join(base_dir, 'test')
    if not os.path.exists(test_dir):
        os.mkdir(test_dir)

    # Directory with the training cat pictures
    train_cats_dir = os.path.join(train_dir, 'cats')
    if not os.path.exists(train_cats_dir):
        os.mkdir(train_cats_dir)

    # Directory with the training dog pictures
    train_dogs_dir = os.path.join(train_dir, 'dogs')
    if not os.path.exists(train_dogs_dir):
        os.mkdir(train_dogs_dir)

    # Directory with the validation cat pictures
    validation_cats_dir = os.path.join(validation_dir, 'cats')
    if not os.path.exists(validation_cats_dir):
        os.mkdir(validation_cats_dir)

    # Directory with the validation dog pictures
    validation_dogs_dir = os.path.join(validation_dir, 'dogs')
    if not os.path.exists(validation_dogs_dir):
        os.mkdir(validation_dogs_dir)

    # Directory with the test cat pictures
    test_cats_dir = os.path.join(test_dir, 'cats')
    if not os.path.exists(test_cats_dir):
        os.mkdir(test_cats_dir)

    # Directory with the test dog pictures
    test_dogs_dir = os.path.join(test_dir, 'dogs')
    if not os.path.exists(test_dogs_dir):
        os.mkdir(test_dogs_dir)

    # Copy the first 1000 cat images to train_cats_dir
    fnames = ['cat.{}.jpg'.format(i) for i in range(1000)]
    for fname in fnames:
        src = os.path.join(original_dataset_dir, fname)
        dst = os.path.join(train_cats_dir, fname)
        if not os.path.exists(dst):
            shutil.copyfile(src, dst)
    print('Copy first 1000 cat images to train_cats_dir complete!')

    # Copy the next 500 cat images to validation_cats_dir
    fnames = ['cat.{}.jpg'.format(i) for i in range(1000, 1500)]
    for fname in fnames:
        src = os.path.join(original_dataset_dir, fname)
        dst = os.path.join(validation_cats_dir, fname)
        if not os.path.exists(dst):
            shutil.copyfile(src, dst)
    print('Copy next 500 cat images to validation_cats_dir complete!')

    # Copy the next 500 cat images to test_cats_dir
    fnames = ['cat.{}.jpg'.format(i) for i in range(1500, 2000)]
    for fname in fnames:
        src = os.path.join(original_dataset_dir, fname)
        dst = os.path.join(test_cats_dir, fname)
        if not os.path.exists(dst):
            shutil.copyfile(src, dst)
    print('Copy next 500 cat images to test_cats_dir complete!')

    # Copy the first 1000 dog images to train_dogs_dir
    fnames = ['dog.{}.jpg'.format(i) for i in range(1000)]
    for fname in fnames:
        src = os.path.join(original_dataset_dir, fname)
        dst = os.path.join(train_dogs_dir, fname)
        if not os.path.exists(dst):
            shutil.copyfile(src, dst)
    print('Copy first 1000 dog images to train_dogs_dir complete!')

    # Copy the next 500 dog images to validation_dogs_dir
    fnames = ['dog.{}.jpg'.format(i) for i in range(1000, 1500)]
    for fname in fnames:
        src = os.path.join(original_dataset_dir, fname)
        dst = os.path.join(validation_dogs_dir, fname)
        if not os.path.exists(dst):
            shutil.copyfile(src, dst)
    print('Copy next 500 dog images to validation_dogs_dir complete!')

    # Copy the next 500 dog images to test_dogs_dir
    fnames = ['dog.{}.jpg'.format(i) for i in range(1500, 2000)]
    for fname in fnames:
        src = os.path.join(original_dataset_dir, fname)
        dst = os.path.join(test_dogs_dir, fname)
        if not os.path.exists(dst):
            shutil.copyfile(src, dst)
    print('Copy next 500 dog images to test_dogs_dir complete!')

Copy first 1000 cat images to train_cats_dir complete!

Copy next 500 cat images to validation_cats_dir complete!

Copy next 500 cat images to test_cats_dir complete!

Copy first 1000 dog images to train_dogs_dir complete!

Copy next 500 dog images to validation_dogs_dir complete!

Copy next 500 dog images to test_dogs_dir complete!
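The six copy loops above all follow one pattern, so they could be folded into a single table-driven loop. The sketch below is one possible way to do that, not the notebook's own code: the helper names `build_subset` and `demo` and the `SPLITS` table are hypothetical, and the demo runs on empty placeholder files in a temporary directory with a scaled-down split table rather than on the real Kaggle images.

```python
import os
import shutil
import tempfile

# Hypothetical split table: subset name -> (first index, one past the last index).
# These are the index ranges used in this article.
SPLITS = {"train": (0, 1000), "validation": (1000, 1500), "test": (1500, 2000)}


def build_subset(original_dir, base_dir, splits=SPLITS, classes=("cat", "dog")):
    """Copy '<class>.<i>.jpg' files into base_dir/<split>/<class>s/ and count them."""
    counts = {}
    for split, (start, stop) in splits.items():
        for cls in classes:
            dst_dir = os.path.join(base_dir, split, cls + "s")
            os.makedirs(dst_dir, exist_ok=True)  # creates parent folders too
            for i in range(start, stop):
                fname = "{}.{}.jpg".format(cls, i)
                dst = os.path.join(dst_dir, fname)
                if not os.path.exists(dst):
                    shutil.copyfile(os.path.join(original_dir, fname), dst)
            counts[(split, cls)] = len(os.listdir(dst_dir))
    return counts


def demo():
    """Run build_subset on 8 placeholder files per class with a tiny split table."""
    small_splits = {"train": (0, 4), "validation": (4, 6), "test": (6, 8)}
    with tempfile.TemporaryDirectory() as tmp:
        original = os.path.join(tmp, "train")
        os.makedirs(original)
        for cls in ("cat", "dog"):
            for i in range(8):
                path = os.path.join(original, "{}.{}.jpg".format(cls, i))
                open(path, "w").close()  # empty stand-in for a JPEG
        target = os.path.join(tmp, "cats_and_dogs_small")
        return build_subset(original, target, splits=small_splits)


for key, n in sorted(demo().items()):
    print(key, n)
```

With the real data, the call would presumably look like `build_subset(original_dataset_dir, base_dir)`, and the returned counts double as the sanity check on the split sizes.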

As a sanity check, let's count how many pictures we have in each split (train/validation/test):

    print('total training cat images:', len(os.listdir(train_cats_dir)))
    print('total training dog images:', len(os.listdir(train_dogs_dir)))
    print('total validation cat images:', len(os.listdir(validation_cats_dir)))
    print('total validation dog images:', len(os.listdir(validation_dogs_dir)))
    print('total test cat images:', len(os.listdir(test_cats_dir)))
    print('total test dog images:', len(os.listdir(test_dogs_dir)))

total training cat images: 1000

total training dog images: 1000

total validation cat images: 500

total validation dog images: 500

total test cat images: 500

total test dog images: 500

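So we do have 2000 training images, 1000 validation images, and 1000 test images. Each split contains the same number of samples from each class: this is a balanced binary classification problem, which means classification accuracy is an appropriate measure of success.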
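To see why class balance matters for accuracy, consider a "classifier" that always predicts the same class. The sketch below is illustrative only: `accuracy` and `always_predict` are hypothetical helpers, and the 950/50 split is a made-up counterexample, not part of this dataset.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the labels."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)


def always_predict(label, n):
    """A trivial 'classifier' that outputs the same label n times."""
    return [label] * n


# Balanced validation split from this article: 500 images of each class.
balanced = [0] * 500 + [1] * 500
# Hypothetical imbalanced split, for contrast only.
imbalanced = [0] * 950 + [1] * 50

balanced_baseline = accuracy(balanced, always_predict(0, len(balanced)))
imbalanced_baseline = accuracy(imbalanced, always_predict(0, len(imbalanced)))

print(balanced_baseline)    # 0.5
print(imbalanced_baseline)  # 0.95
```

On the balanced split the trivial baseline scores 50%, so any accuracy well above that reflects real learning. On the imbalanced split the same trivial strategy already scores 95%, which is why accuracy alone would be misleading there.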

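Data preprocessing

As you already know by now, data should be formatted into appropriately preprocessed floating-point tensors before being fed into our network. Currently, our data sits on disk as JPEG files in a directory, so the steps for getting it into our network are roughly:

- Read the picture files.

- Decode the JPEG content into RGB grids of pixels.

- Convert these into floating-point tensors.

- Rescale the pixel values (between 0 and 255) to the [0, 1] interval (as you know, neural networks prefer to deal with small input values).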
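The last step of the list above, rescaling, is just a multiplication by 1/255. The sketch below shows it on a tiny hand-written "image" stored as nested Python lists; the `rescale` helper is hypothetical and only mimics the effect of a `rescale=1./255` setting, it is not how Keras implements it.

```python
def rescale(pixels, factor=1.0 / 255):
    """Multiply every channel value of an image (rows x columns x channels)."""
    return [[[channel * factor for channel in pixel] for pixel in row]
            for row in pixels]


# A tiny 1x2 RGB "image" with integer values in [0, 255].
tiny_image = [[[0, 128, 255], [255, 255, 0]]]
scaled = rescale(tiny_image)

for pixel in scaled[0]:
    print(["{:.3f}".format(value) for value in pixel])
```

After rescaling, every value lies between 0 and 1 (up to floating-point rounding), which is the range the network is fed in the rest of this article.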

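This may seem a bit daunting, but thankfully Keras has utilities that take care of these steps automatically. Keras has a module with image processing helper tools, located at keras.preprocessing.image. In particular, it contains the class ImageDataGenerator, which lets you quickly set up Python generators that automatically turn image files on disk into batches of preprocessed tensors. This is what we will use here.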

    from keras.preprocessing.image import ImageDataGenerator

    # All images will be rescaled by 1./255
    train_datagen = ImageDataGenerator(rescale=1./255)
    test_datagen = ImageDataGenerator(rescale=1./255)

    # Read the images directly from the directory
    train_generator = train_datagen.flow_from_directory(
        # This is the directory that holds the images
        train_dir,
        # All images will be resized to 150x150
        target_size=(150, 150),
        # Yield batches of 20 images
        batch_size=20,
        # This is a binary classification problem, so we need binary labels
        class_mode='binary')

    # Read the images directly from the directory
    validation_generator = test_datagen.flow_from_directory(
        validation_dir,
        target_size=(150, 150),
        batch_size=20,
        class_mode='binary')

Found 2000 images belonging to 2 classes.

Found 1000 images belonging to 2 classes.

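Let's look at the output of one of these generators: it yields batches of 150×150 RGB images (shape (20, 150, 150, 3)) and binary labels (shape (20,)). 20 is the number of samples in each batch (the batch size). Note that the generator yields these batches indefinitely: it simply loops endlessly over the images in the target folder. For this reason, we need to break out of the iteration loop at some point.

    for data_batch, labels_batch in train_generator:
        print('data batch shape:', data_batch.shape)
        print('labels batch shape:', labels_batch.shape)
        break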

data batch shape: (20, 150, 150, 3)

labels batch shape: (20,)
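The "loops forever, so you must break" behavior is easy to reproduce with a plain Python generator. The following is a minimal sketch under simplifying assumptions: `batch_generator` is a hypothetical stand-in that cycles over a list of integers in order, whereas the real Keras generator yields image tensors and can shuffle them.

```python
import itertools


def batch_generator(samples, batch_size):
    """Yield batches forever by cycling over `samples` in order."""
    endless = itertools.cycle(samples)
    while True:
        yield list(itertools.islice(endless, batch_size))


# Stand-in for 2000 image files; real code would yield image tensors.
fake_samples = list(range(2000))

batches_seen = 0
for batch in batch_generator(fake_samples, batch_size=20):
    batches_seen += 1
    if batches_seen == 100:  # one full pass: 100 batches x 20 samples
        break

print(batches_seen, len(batch))  # 100 20
```

Without the `break`, the loop would never end, because the generator wraps around to the first sample as soon as it runs out.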

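We will train our model on the data from these generators, using the fit_generator method.

Because the data is generated endlessly, the model needs to know how many batches to draw from the generator before declaring an epoch over. This is the role of the steps_per_epoch argument: after having drawn steps_per_epoch batches from the generator, i.e. after having run steps_per_epoch gradient descent steps, the fitting process moves on to the next epoch. In our case, batches contain 20 samples, so it takes 100 batches for the model to see our target of 2000 samples.

When using fit_generator, you can pass a validation_data argument, much like with the fit method. Importantly, this argument is allowed to be a data generator itself, but it can also be a tuple of Numpy arrays. If you pass a generator as validation_data, that generator is expected to yield batches of validation data endlessly, so you should also specify the validation_steps argument, which tells the process how many batches to draw from the validation generator for evaluation.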
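The values for steps_per_epoch and validation_steps follow directly from the split sizes and the batch size. A small sketch of that arithmetic, with `steps_for` as a hypothetical helper name:

```python
import math


def steps_for(num_samples, batch_size):
    """Number of batches needed to see every sample at least once."""
    return math.ceil(num_samples / batch_size)


steps_per_epoch = steps_for(2000, 20)   # 2000 training images
validation_steps = steps_for(1000, 20)  # 1000 validation images
print(steps_per_epoch, validation_steps)  # 100 50
```

Rounding up matters when the batch size does not divide the sample count evenly: with a batch size of 32, for instance, `steps_for(2000, 32)` gives 63 rather than 62.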

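Model

Our convnet will be a stack of alternating Conv2D (with relu activation) and MaxPooling2D layers. We start from inputs of size 150×150 (a somewhat arbitrary choice), and we end up with feature maps of size 7×7 right before the Flatten layer.

Note that the depth of the feature maps progressively increases in the network (from 32 to 128), while the size of the feature maps decreases (from 148×148 to 7×7). This is a pattern that you will see in almost all convnets.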
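The feature map sizes quoted above (148×148 down to 7×7) can be derived by hand. The sketch below assumes the Keras defaults for these layers: 3×3 convolutions without padding and with stride 1, and 2×2 max pooling whose stride equals the pool size. `conv_output` and `pool_output` are hypothetical helper names.

```python
def conv_output(size, kernel=3):
    """Spatial size after an unpadded ('valid') convolution with stride 1."""
    return size - kernel + 1


def pool_output(size, pool=2):
    """Spatial size after non-overlapping max pooling (rounds down)."""
    return size // pool


size = 150
sizes = []
for _ in range(4):  # four Conv2D + MaxPooling2D pairs
    size = conv_output(size)
    sizes.append(size)
    size = pool_output(size)
    sizes.append(size)

print(sizes)  # [148, 74, 72, 36, 34, 17, 15, 7]
```

Each convolution trims one pixel from every border and each pooling layer halves the size, so four such pairs take a 150×150 input down to 7×7.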

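Since we are tackling a binary classification problem, we end the network with a single unit (a Dense layer of size 1) and a sigmoid activation. This unit encodes the probability that the network is looking at one class or the other.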

    from keras import layers
    from keras import models
    from keras.utils import plot_model

    model = models.Sequential()
    model.add(layers.Conv2D(32, (3, 3), activation='relu',
                            input_shape=(150, 150, 3)))
    model.add(layers.MaxPooling2D((2, 2)))
    model.add(layers.Conv2D(64, (3, 3), activation='relu'))
    model.add(layers.MaxPooling2D((2, 2)))
    model.add(layers.Conv2D(128, (3, 3), activation='relu'))
    model.add(layers.MaxPooling2D((2, 2)))
    model.add(layers.Conv2D(128, (3, 3), activation='relu'))
    model.add(layers.MaxPooling2D((2, 2)))
    model.add(layers.Flatten())
    model.add(layers.Dense(512, activation='relu'))
    model.add(layers.Dense(1, activation='sigmoid'))

Let's take a look at how the dimensions of the feature maps change with every successive layer:

    # Print the network structure
    model.summary()

    Layer (type)                 Output Shape              Param #
    =================================================================
    conv2d_1 (Conv2D)            (None, 148, 148, 32)      896
    max_pooling2d_1 (MaxPooling2 (None, 74, 74, 32)        0
    conv2d_2 (Conv2D)            (None, 72, 72, 64)        18496
    max_pooling2d_2 (MaxPooling2 (None, 36, 36, 64)        0
    conv2d_3 (Conv2D)            (None, 34, 34, 128)       73856
    max_pooling2d_3 (MaxPooling2 (None, 17, 17, 128)       0
    conv2d_4 (Conv2D)            (None, 15, 15, 128)       147584
    max_pooling2d_4 (MaxPooling2 (None, 7, 7, 128)         0
    flatten_1 (Flatten)          (None, 6272)              0
    dense_1 (Dense)              (None, 512)               3211776
    dense_2 (Dense)              (None, 1)                 513
    =================================================================
    Total params: 3,453,121
    Trainable params: 3,453,121
    Non-trainable params: 0
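The parameter counts in the summary above can be reproduced by hand, which is a useful check that the architecture is what we think it is. In this sketch, `conv2d_params` and `dense_params` are hypothetical helper names; the formulas count one weight per input connection plus one bias per filter or unit.

```python
def conv2d_params(kernel_h, kernel_w, in_channels, filters):
    """Kernel weights plus one bias per filter."""
    return (kernel_h * kernel_w * in_channels + 1) * filters


def dense_params(inputs, units):
    """One weight per input plus one bias, for every unit."""
    return (inputs + 1) * units


layer_params = [
    conv2d_params(3, 3, 3, 32),      # conv2d_1
    conv2d_params(3, 3, 32, 64),     # conv2d_2
    conv2d_params(3, 3, 64, 128),    # conv2d_3
    conv2d_params(3, 3, 128, 128),   # conv2d_4
    dense_params(7 * 7 * 128, 512),  # dense_1, fed by the flattened 7x7x128 map
    dense_params(512, 1),            # dense_2
]

print(layer_params)       # [896, 18496, 73856, 147584, 3211776, 513]
print(sum(layer_params))  # 3453121
```

Note that the first Dense layer alone holds about 3.2 million of the 3.45 million parameters; the pooling and Flatten layers have none.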

For the compilation step, we will use the RMSprop optimizer. Since we ended our network with a single sigmoid unit, we will use binary crossentropy as our loss function.
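For intuition, binary crossentropy for a label y and a predicted probability p is -(y·log(p) + (1-y)·log(1-p)), averaged over the batch. The plain-Python sketch below illustrates the formula only; the `binary_crossentropy` function here is a hypothetical helper, and its `eps` clipping constant is an assumption made to avoid log(0), not a statement about Keras internals.

```python
import math


def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy over a batch of labels and probabilities."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip so that log() stays finite
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


# An undecided model that outputs 0.5 everywhere scores ln(2), about 0.693.
undecided = binary_crossentropy([0, 1, 1, 0], [0.5, 0.5, 0.5, 0.5])
# Confident and correct predictions give a loss close to 0.
confident = binary_crossentropy([0, 1], [0.01, 0.99])

print(round(undecided, 4))
print(round(confident, 4))
```

This gives a handy reference point when reading training logs: a loss near 0.69 on a balanced binary problem means the model is doing no better than guessing.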

    from keras import optimizers

    model.compile(loss='binary_crossentropy',
                  optimizer=optimizers.RMSprop(lr=1e-4),
                  metrics=['acc'])

Training

    history = model.fit_generator(
        train_generator,
        steps_per_epoch=100,
        epochs=30,
        validation_data=validation_generator,
        validation_steps=50)

Epoch 1/30

100/100 [==============================] – 11s 112ms/step – loss: 0.6955 – acc: 0.5295 – val_loss : 0.6809 – val_acc: 0.5340

Epoch 2/30

100/100 [==============================] – 8s 78ms/step – loss: 0.6572 – acc: 0.6135 – val_loss : 0.6676 – val_acc: 0.5710

Epoch 3/30

100/100 [==============================] – 8s 76ms/step – loss: 0.6214 – acc: 0.6590 – val_loss : 0.6408 – val_acc: 0.6290

Epoch 4/30

100/100 [==============================] – 8s 77ms/step – loss: 0.5882 – acc: 0.6920 – val_loss : 0.6296 – val_acc: 0.6440

Epoch 5/30

100/100 [==============================] – 8s 77ms/step – loss: 0.5517 – acc: 0.7130 – val_loss : 0.7241 – val_acc: 0.5950

Epoch 6/30

100/100 [==============================] – 8s 76ms/step – loss: 0.5108 – acc: 0.7530 – val_loss : 0.5785 – val_acc: 0.7010

Epoch 7/30

100/100 [==============================] – 8s 78ms/step – loss: 0.4899 – acc: 0.7680 – val_loss : 0.5620 – val_acc: 0.7060

Epoch 8/30

100/100 [==============================] – 8s 76ms/step – loss: 0.4575 – acc: 0.7810 – val_loss : 0.5803 – val_acc: 0.6970

Epoch 9/30

100/100 [==============================] – 8s 76ms/step – loss: 0.4193 – acc: 0.8070 – val_loss : 0.5881 – val_acc: 0.7120

Epoch 10/30

100/100 [==============================] – 8s 75ms/step – loss: 0.3869 – acc: 0.8195 – val_loss : 0.5986 – val_acc: 0.7050

Epoch 11/30

100/100 [==============================] – 8s 75ms/step – loss: 0.3620 – acc: 0.8355 – val_loss : 0.6368 – val_acc: 0.7090

Epoch 12/30

100/100 [==============================] – 8s 76ms/step – loss: 0.3434 – acc: 0.8480 – val_loss : 0.6214 – val_acc: 0.6970

Epoch 13/30

100/100 [==============================] – 8s 75ms/step – loss: 0.3165 – acc: 0.8670 – val_loss : 0.6897 – val_acc: 0.7010

Epoch 14/30

100/100 [==============================] – 8s 76ms/step – loss: 0.2878 – acc: 0.8755 – val_loss : 0.6249 – val_acc: 0.7100

Epoch 15/30

100/100 [==============================] – 8s 77ms/step – loss: 0.2650 – acc: 0.8975 – val_loss : 0.6438 – val_acc: 0.7060

Epoch 16/30

100/100 [==============================] – 8s 76ms/step – loss: 0.2362 – acc: 0.9090 – val_loss : 0.7780 – val_acc: 0.6920

Epoch 17/30

100/100 [==============================] – 8s 76ms/step – loss: 0.2098 – acc: 0.9165 – val_loss : 0.8215 – val_acc: 0.6750

Epoch 18/30

100/100 [==============================] – 8s 76ms/step – loss: 0.1862 – acc: 0.9305 – val_loss : 0.7044 – val_acc: 0.7120

Epoch 19/30

100/100 [==============================] – 8s 75ms/step – loss: 0.1669 – acc: 0.9425 – val_loss : 0.7941 – val_acc: 0.6990

Epoch 20/30

100/100 [==============================] – 8s 75ms/step – loss: 0.1522 – acc: 0.9475 – val_loss : 0.8285 – val_acc: 0.6960

Epoch 21/30

100/100 [==============================] – 8s 75ms/step – loss: 0.1254 – acc: 0.9575 – val_loss : 0.8199 – val_acc: 0.7070

Epoch 22/30

100/100 [==============================] – 8s 78ms/step – loss: 0.1117 – acc: 0.9620 – val_loss : 0.9325 – val_acc: 0.7090

Epoch 23/30

100/100 [==============================] – 8s 76ms/step – loss: 0.0907 – acc: 0.9750 – val_loss : 0.8740 – val_acc: 0.7220

Epoch 24/30

100/100 [==============================] – 8s 75ms/step – loss: 0.0806 – acc: 0.9755 – val_loss : 1.0178 – val_acc: 0.6900

Epoch 25/30

100/100 [==============================] – 8s 75ms/step – loss: 0.0602 – acc: 0.9815 – val_loss : 0.9158 – val_acc: 0.7260

Epoch 26/30

100/100 [==============================] – 8s 76ms/step – loss: 0.0591 – acc: 0.9810 – val_loss : 1.1284 – val_acc: 0.7030

Epoch 27/30

100/100 [==============================] – 8s 75ms/step – loss: 0.0511 – acc: 0.9820 – val_loss : 1.1136 – val_acc: 0.7140

Epoch 28/30

100/100 [==============================] – 8s 76ms/step – loss: 0.0335 – acc: 0.9930 – val_loss : 1.4372 – val_acc: 0.6820

Epoch 29/30

100/100 [==============================] – 8s 75ms/step – loss: 0.0409 – acc: 0.9860 – val_loss : 1.2121 – val_acc: 0.6930

Epoch 30/30

100/100 [==============================] – 8s 76ms/step – loss: 0.0271 – acc: 0.9920 – val_loss : 1.3055 – val_acc: 0.7010

It is good practice to save your model once training is done:

    model.save('cats_and_dogs_small_1.h5')

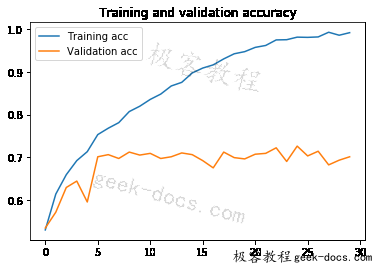

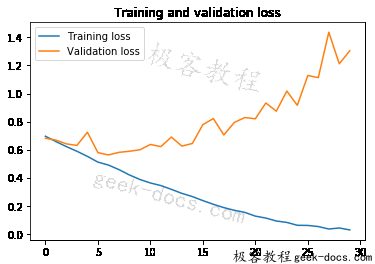

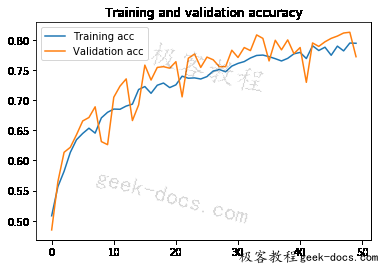

Let's plot the loss and accuracy of the model over the training and validation data during training:

    import matplotlib.pyplot as plt

    acc = history.history['acc']
    val_acc = history.history['val_acc']
    loss = history.history['loss']
    val_loss = history.history['val_loss']

    epochs = range(len(acc))

    plt.plot(epochs, acc, label='Training acc')
    plt.plot(epochs, val_acc, label='Validation acc')
    plt.title('Training and validation accuracy')
    plt.legend()

    plt.figure()

    plt.plot(epochs, loss, label='Training loss')
    plt.plot(epochs, val_loss, label='Validation loss')
    plt.title('Training and validation loss')
    plt.legend()

    plt.show()

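These plots are characteristic of overfitting. Our training accuracy increases roughly linearly over time until it reaches nearly 100%, while our validation accuracy stalls at 70% to 72%. Our validation loss reaches its minimum after only a few epochs (epoch 7 in the log above) and then stalls and drifts upward, while the training loss keeps decreasing roughly linearly until it reaches nearly 0.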
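The point where the validation loss bottoms out can also be read programmatically instead of from the plot. The sketch below uses the validation losses of the first ten epochs, copied from the training log above; `best_epoch` is a hypothetical helper, and in the notebook one would presumably pass `history.history['val_loss']` instead of the hard-coded list.

```python
# Validation loss for epochs 1 to 10, copied from the training log above.
val_loss = [0.6809, 0.6676, 0.6408, 0.6296, 0.7241,
            0.5785, 0.5620, 0.5803, 0.5881, 0.5986]


def best_epoch(losses):
    """Return the 1-based epoch with the lowest loss, and that loss."""
    index = min(range(len(losses)), key=losses.__getitem__)
    return index + 1, losses[index]


epoch, lowest = best_epoch(val_loss)
print(epoch, lowest)  # 7 0.562
```

Everything the model learns after that epoch improves the training loss only, which is the signature of overfitting described above.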

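Because we only have relatively few training samples (2000), overfitting is going to be our number one concern. You already know about a number of techniques that can help mitigate overfitting, such as dropout and weight decay (L2 regularization). We are now going to introduce a new one, specific to computer vision and used almost universally when processing images with deep learning models: data augmentation.

Using data augmentation

Overfitting is caused by having too few samples to learn from, which leaves us unable to train a model that generalizes to new data.

Given infinite data, our model would be exposed to every possible aspect of the data distribution at hand: we would never overfit. Data augmentation takes the approach of generating more training data from existing training samples, by "augmenting" the samples via a number of random transformations that yield believable-looking images. The goal is that at training time, our model never sees the exact same picture twice. This exposes the model to more aspects of the data and helps it generalize better.

In Keras, this can be done by configuring a number of random transformations to be performed on the images read by our ImageDataGenerator instance. Let's get started with an example: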

    datagen = ImageDataGenerator(
        rotation_range=40,
        width_shift_range=0.2,
        height_shift_range=0.2,
        shear_range=0.2,
        zoom_range=0.2,
        horizontal_flip=True,
        fill_mode='nearest')

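These are just a few of the options available (for more, see the Keras documentation). Let's quickly go over them:

- rotation_range is a value in degrees (0-180), a range within which to randomly rotate pictures.

- width_shift_range and height_shift_range are ranges (as a fraction of total width or height) within which to randomly translate pictures horizontally or vertically.

- shear_range is for randomly applying shearing transformations.

- zoom_range is for randomly zooming inside pictures.

- horizontal_flip is for randomly flipping half of the images horizontally, which is relevant when there is no assumption of horizontal asymmetry (e.g. real-world pictures).

- fill_mode is the strategy used for filling in newly created pixels, which can appear after a rotation or a width/height shift.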
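To make two of the options above concrete, here is a toy version of a horizontal flip and of a shift with "nearest" filling, applied to a tiny grid of numbers instead of a real picture. This is only a sketch of the idea: the helpers `horizontal_flip`, `shift_right_nearest` and `random_augment` are hypothetical, they work on whole pixels, and they do not reproduce how Keras implements or interpolates these transformations.

```python
import random


def horizontal_flip(image):
    """Mirror each row of a 2D image (a list of rows)."""
    return [list(reversed(row)) for row in image]


def shift_right_nearest(image, pixels):
    """Shift columns right, filling the gap with the nearest edge pixel."""
    shifted = []
    for row in image:
        k = max(0, min(pixels, len(row)))  # clamp the shift to the row width
        shifted.append([row[0]] * k + list(row[:len(row) - k]))
    return shifted


def random_augment(image, rng, max_shift=1):
    """Apply a flip with probability 0.5, then a random right shift."""
    if rng.random() < 0.5:
        image = horizontal_flip(image)
    return shift_right_nearest(image, rng.randint(0, max_shift))


image = [[1, 2, 3],
         [4, 5, 6]]

print(horizontal_flip(image))         # [[3, 2, 1], [6, 5, 4]]
print(shift_right_nearest(image, 1))  # [[1, 1, 2], [4, 4, 5]]
print(random_augment(image, random.Random(0)))
```

In the shifted output, the first column is duplicated: that is the "nearest" fill strategy, which repeats the closest existing pixel instead of leaving a blank border.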

Let's take a look at our augmented images:

    import matplotlib.pyplot as plt
    from keras.preprocessing import image

    # List the cat pictures in the training set
    fnames = [os.path.join(train_cats_dir, fname) for fname in os.listdir(train_cats_dir)]

    # Pick one image to augment
    img_path = fnames[3]

    # Read the image and resize it
    img = image.load_img(img_path, target_size=(150, 150))

    # Convert it to a Numpy array with shape (150, 150, 3)
    x = image.img_to_array(img)

    # Reshape it to (1, 150, 150, 3), since flow() expects a batch of images
    x = x.reshape((1,) + x.shape)

    # The flow() method generates batches of randomly transformed images.
    # It loops indefinitely, so we need to break out of the loop at some point.
    i = 0
    for batch in datagen.flow(x, batch_size=1):
        plt.figure(i)
        imgplot = plt.imshow(image.array_to_img(batch[0]))
        i += 1
        if i % 4 == 0:
            break

    plt.show()

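If we train a new network using this data augmentation configuration, our network will never see the same input twice. However, the inputs it sees are still heavily intercorrelated, since they come from a small number of original images: we cannot produce new information, we can only remix existing information. As such, this might not be quite enough to completely get rid of overfitting. To further fight overfitting, we will also add a Dropout layer to our model, right before the densely-connected classifier: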
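As a reminder of what a dropout layer does during training, the sketch below applies "inverted" dropout to a short list of activations: each value is zeroed with probability `rate`, and the survivors are scaled up so that the expected sum stays the same. The `dropout` function and the fixed random seed are illustrative assumptions, not the Keras implementation.

```python
import random


def dropout(values, rate, rng, training=True):
    """Zero each value with probability `rate`; rescale the values that remain."""
    if not training or rate == 0.0:
        return list(values)  # dropout is a no-op at inference time
    keep = 1.0 - rate
    return [v / keep if rng.random() >= rate else 0.0 for v in values]


rng = random.Random(42)  # fixed seed so the sketch is repeatable
activations = [1.0] * 10

dropped = dropout(activations, rate=0.5, rng=rng)
untouched = dropout(activations, rate=0.5, rng=rng, training=False)

print(dropped)    # a mix of 0.0 and 2.0
print(untouched)  # unchanged: ten values of 1.0
```

With a rate of 0.5, as used in the model that follows, about half of the flattened features are randomly silenced on each training step, which keeps the classifier from relying too heavily on any single feature.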

    model = models.Sequential()
    model.add(layers.Conv2D(32, (3, 3), activation='relu',
                            input_shape=(150, 150, 3)))
    model.add(layers.MaxPooling2D((2, 2)))
    model.add(layers.Conv2D(64, (3, 3), activation='relu'))
    model.add(layers.MaxPooling2D((2, 2)))
    model.add(layers.Conv2D(128, (3, 3), activation='relu'))
    model.add(layers.MaxPooling2D((2, 2)))
    model.add(layers.Conv2D(128, (3, 3), activation='relu'))
    model.add(layers.MaxPooling2D((2, 2)))
    model.add(layers.Flatten())
    model.add(layers.Dropout(0.5))
    model.add(layers.Dense(512, activation='relu'))
    model.add(layers.Dense(1, activation='sigmoid'))

    model.compile(loss='binary_crossentropy',
                  optimizer=optimizers.RMSprop(lr=1e-4),
                  metrics=['acc'])

Let's train our network using data augmentation and dropout:

    train_datagen = ImageDataGenerator(
        rescale=1./255,
        rotation_range=40,
        width_shift_range=0.2,
        height_shift_range=0.2,
        shear_range=0.2,
        zoom_range=0.2,
        horizontal_flip=True)

    # The validation data is only rescaled, not augmented
    test_datagen = ImageDataGenerator(rescale=1./255)

    train_generator = train_datagen.flow_from_directory(
        # This is the directory that holds the images
        train_dir,
        # All images will be resized to 150x150
        target_size=(150, 150),
        batch_size=32,
        # This is a binary classification problem, so we need binary labels
        class_mode='binary')

    validation_generator = test_datagen.flow_from_directory(
        validation_dir,
        target_size=(150, 150),
        batch_size=32,
        class_mode='binary')

    history = model.fit_generator(
        train_generator,
        steps_per_epoch=100,
        epochs=50,
        validation_data=validation_generator,
        validation_steps=50)

Found 2000 images belonging to 2 classes.

Found 1000 images belonging to 2 classes.

Epoch 1/50

100/100 [==============================] – 21s 208ms/step – loss: 0.6937 – acc: 0.5078 – val_loss : 0.6878 – val_acc: 0.4848

Epoch 2/50

100/100 [==============================] – 19s 189ms/step – loss: 0.6829 – acc: 0.5572 – val_loss : 0.6779 – val_acc: 0.5647

Epoch 3/50

100/100 [==============================] – 19s 189ms/step – loss: 0.6743 – acc: 0.5816 – val_loss : 0.6520 – val_acc: 0.6136

Epoch 4/50

100/100 [==============================] – 19s 189ms/step – loss: 0.6619 – acc: 0.6122 – val_loss : 0.6348 – val_acc: 0.6218

Epoch 5/50

100/100 [==============================] – 19s 189ms/step – loss: 0.6448 – acc: 0.6338 – val_loss : 0.6166 – val_acc: 0.6428

Epoch 6/50

100/100 [==============================] – 19s 187ms/step – loss: 0.6342 – acc: 0.6453 – val_loss : 0.6137 – val_acc: 0.6656

Epoch 7/50

100/100 [==============================] – 19s 189ms/step – loss: 0.6255 – acc: 0.6525 – val_loss : 0.5948 – val_acc: 0.6713

Epoch 8/50

100/100 [==============================] – 19s 188ms/step – loss: 0.6269 – acc: 0.6444 – val_loss : 0.5899 – val_acc: 0.6891

Epoch 9/50

100/100 [==============================] – 19s 187ms/step – loss: 0.6105 – acc: 0.6691 – val_loss : 0.6440 – val_acc: 0.6313

Epoch 10/50

100/100 [==============================] – 19s 189ms/step – loss: 0.5952 – acc: 0.6794 – val_loss : 0.6291 – val_acc: 0.6263

Epoch 11/50

100/100 [==============================] – 21s 209ms/step – loss: 0.5926 – acc: 0.6850 – val_loss : 0.5518 – val_acc: 0.7049

Epoch 12/50

100/100 [==============================] – 19s 193ms/step – loss: 0.5830 – acc: 0.6844 – val_loss : 0.5418 – val_acc: 0.7234

Epoch 13/50

100/100 [==============================] – 19s 189ms/step – loss: 0.5839 – acc: 0.6903 – val_loss : 0.5382 – val_acc: 0.7354

Epoch 14/50

100/100 [==============================] – 19s 187ms/step – loss: 0.5663 – acc: 0.6944 – val_loss : 0.5891 – val_acc: 0.6662

Epoch 15/50

100/100 [==============================] – 19s 187ms/step – loss: 0.5620 – acc: 0.7175 – val_loss : 0.5613 – val_acc: 0.6923

Epoch 16/50

100/100 [==============================] – 19s 188ms/step – loss: 0.5458 – acc: 0.7228 – val_loss : 0.4970 – val_acc: 0.7582

Epoch 17/50

100/100 [==============================] – 19s 187ms/step – loss: 0.5478 – acc: 0.7106 – val_loss : 0.5104 – val_acc: 0.7335

Epoch 18/50

100/100 [==============================] – 19s 188ms/step – loss: 0.5479 – acc: 0.7250 – val_loss : 0.4990 – val_acc: 0.7544

Epoch 19/50

100/100 [==============================] – 19s 189ms/step – loss: 0.5390 – acc: 0.7275 – val_loss : 0.4918 – val_acc: 0.7557

Epoch 20/50

100/100 [==============================] – 19s 187ms/step – loss: 0.5391 – acc: 0.7209 – val_loss : 0.4965 – val_acc: 0.7532

Epoch 21/50

100/100 [==============================] – 19s 187ms/step – loss: 0.5379 – acc: 0.7262 – val_loss : 0.4888 – val_acc: 0.7640

Epoch 22/50

100/100 [==============================] – 19s 188ms/step – loss: 0.5168 – acc: 0.7400 – val_loss : 0.5499 – val_acc: 0.7056

Epoch 23/50

100/100 [==============================] – 19s 188ms/step – loss: 0.5250 – acc: 0.7369 – val_loss : 0.4768 – val_acc: 0.7697

Epoch 24/50

100/100 [==============================] – 19s 189ms/step – loss: 0.5088 – acc: 0.7359 – val_loss : 0.4716 – val_acc: 0.7766

Epoch 25/50

100/100 [==============================] – 19s 188ms/step – loss: 0.5218 – acc: 0.7359 – val_loss : 0.4922 – val_acc: 0.7544

Epoch 26/50

100/100 [==============================] – 19s 187ms/step – loss: 0.5143 – acc: 0.7391 – val_loss : 0.4687 – val_acc: 0.7716

Epoch 27/50

100/100 [==============================] – 19s 188ms/step – loss: 0.5111 – acc: 0.7494 – val_loss : 0.4637 – val_acc: 0.7671

Epoch 28/50

100/100 [==============================] – 19s 190ms/step – loss: 0.4974 – acc: 0.7506 – val_loss : 0.4899 – val_acc: 0.7557

Epoch 29/50

100/100 [==============================] – 19s 188ms/step – loss: 0.5136 – acc: 0.7463 – val_loss : 0.5077 – val_acc: 0.7557

Epoch 30/50

100/100 [==============================] – 19s 190ms/step – loss: 0.5019 – acc: 0.7559 – val_loss : 0.4595 – val_acc: 0.7830

Epoch 31/50

100/100 [==============================] – 19s 188ms/step – loss: 0.4961 – acc: 0.7628 – val_loss : 0.4805 – val_acc: 0.7709

Epoch 32/50

100/100 [==============================] – 19s 190ms/step – loss: 0.4925 – acc: 0.7638 – val_loss : 0.4463 – val_acc: 0.7874

Epoch 33/50

100/100 [==============================] – 19s 189ms/step – loss: 0.4783 – acc: 0.7700 – val_loss : 0.4667 – val_acc: 0.7824

Epoch 34/50

100/100 [==============================] – 19s 190ms/step – loss: 0.4792 – acc: 0.7738 – val_loss : 0.4307 – val_acc: 0.8084

Epoch 35/50

100/100 [==============================] – 19s 190ms/step – loss: 0.4774 – acc: 0.7753 – val_loss : 0.4269 – val_acc: 0.8027

Epoch 36/50

100/100 [==============================] – 19s 191ms/step – loss: 0.4756 – acc: 0.7725 – val_loss : 0.4642 – val_acc: 0.7652

Epoch 37/50

100/100 [==============================] – 19s 190ms/step – loss: 0.4796 – acc: 0.7684 – val_loss : 0.4349 – val_acc: 0.7995

Epoch 38/50

100/100 [==============================] – 19s 190ms/step – loss: 0.4895 – acc: 0.7665 – val_loss : 0.4588 – val_acc: 0.7836

Epoch 39/50

100/100 [==============================] – 19s 190ms/step – loss: 0.4832 – acc: 0.7694 – val_loss : 0.4243 – val_acc: 0.8001

Epoch 40/50

100/100 [==============================] – 19s 191ms/step – loss: 0.4678 – acc: 0.7772 – val_loss : 0.4442 – val_acc: 0.7773

Epoch 41/50

100/100 [==============================] – 19s 188ms/step – loss: 0.4623 – acc: 0.7797 – val_loss : 0.4565 – val_acc: 0.7874

Epoch 42/50

100/100 [==============================] – 19s 190ms/step – loss: 0.4668 – acc: 0.7697 – val_loss : 0.5352 – val_acc: 0.7297

Epoch 43/50

100/100 [==============================] – 19s 191ms/step – loss: 0.4612 – acc: 0.7906 – val_loss : 0.4236 – val_acc: 0.7951

Epoch 44/50

100/100 [==============================] – 19s 189ms/step – loss: 0.4598 – acc: 0.7816 – val_loss : 0.4343 – val_acc: 0.7893

Epoch 45/50

100/100 [==============================] – 19s 189ms/step – loss: 0.4553 – acc: 0.7881 – val_loss : 0.4315 – val_acc: 0.7970

Epoch 46/50

100/100 [==============================] – 19s 189ms/step – loss: 0.4621 – acc: 0.7734 – val_loss : 0.4303 – val_acc: 0.8027

Epoch 47/50

100/100 [==============================] – 19s 189ms/step – loss: 0.4516 – acc: 0.7912 – val_loss : 0.4099 – val_acc: 0.8065

Epoch 48/50

100/100 [==============================] – 19s 190ms/step – loss: 0.4524 – acc: 0.7822 – val_loss : 0.4088 – val_acc: 0.8115

Epoch 49/50

100/100 [==============================] – 19s 189ms/step – loss: 0.4508 – acc: 0.7944 – val_loss : 0.4048 – val_acc: 0.8128

Epoch 50/50

100/100 [==============================] – 19s 188ms/step – loss: 0.4368 – acc: 0.7953 – val_loss : 0.4746 – val_acc: 0.7722

Let's save our model; we will use it again in the article on convnet visualization.

    model.save('cats_and_dogs_small_2.h5')

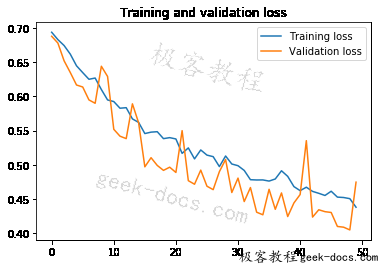

Let's plot our results again:

    acc = history.history['acc']
    val_acc = history.history['val_acc']
    loss = history.history['loss']
    val_loss = history.history['val_loss']

    epochs = range(len(acc))

    plt.plot(epochs, acc, label='Training acc')
    plt.plot(epochs, val_acc, label='Validation acc')
    plt.title('Training and validation accuracy')
    plt.legend()

    plt.figure()

    plt.plot(epochs, loss, label='Training loss')
    plt.plot(epochs, val_loss, label='Validation loss')
    plt.title('Training and validation loss')
    plt.legend()

    plt.show()

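Thanks to data augmentation and dropout, we are no longer overfitting: the training curves closely track the validation curves. We are now able to reach an accuracy of 82%, a relative improvement of roughly 15% over the non-regularized model.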
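The two ways of quoting the gain are easy to confuse, so here is the arithmetic spelled out, using the rounded accuracies reported in this article (about 71% for the baseline and 82% with augmentation and dropout). The variable names are just for illustration.

```python
baseline_acc = 0.71   # small convnet without regularization
augmented_acc = 0.82  # with data augmentation and dropout

absolute_gain = augmented_acc - baseline_acc
relative_gain = absolute_gain / baseline_acc

print("absolute: {:.0f} points".format(absolute_gain * 100))  # absolute: 11 points
print("relative: {:.1f}%".format(relative_gain * 100))        # relative: 15.5%
```

The improvement is 11 percentage points in absolute terms, which corresponds to roughly 15% when measured relative to the baseline accuracy.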

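By using further regularization techniques and by tuning the network's hyperparameters (such as the number of filters per convolution layer, or the number of layers in the network), we may be able to get an even better accuracy, possibly up to 86% or 87%. However, it would prove very difficult to go any higher just by training our own convnet from scratch, simply because we have so little data to work with. As a next step to improve our accuracy on this problem, we will leverage a pre-trained model.

Summary

A few interesting takeaways from this article:

- Data augmentation can improve the performance of image classification when training data is scarce

- Dropout helps curb overfitting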

极客教程
